Last year I wrote a few thousand words about the trailer for the Spike Jonze film Her. I have finally got around to watching the movie proper, and I must say it was not what I expected it to be. I pessimistically expected a very anti-technology cautionary tale about the dangers of escapism and withdrawing from society. I envisioned it as the Lars and the Real Girl for the digital generation. But it was not. Jonze surprised me by crafting a heartwarming, bittersweet transhumanist love story. A completely un-ironic, non-judgmental tale of a man and an operating system falling in love with each other.

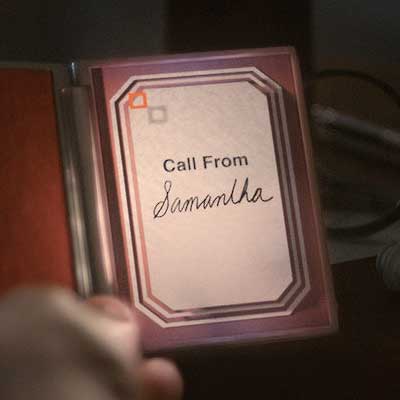

The question of whether or not Samantha, an operating system, is sentient barely even comes up in the movie. I was expecting the protagonist to be criticized or even ostracized by his loved ones for developing feelings for an artificial intelligence, but they almost unanimously accept his digital lover. After all, how could you not? Samantha is funny, sexy, chatty and unmistakably human. Her cheerful disposition and outgoing attitude make people comfortable and relaxed. They bond with her and accept her personhood before they can even form any kind of prejudice against her. In fact, the only person who ever questions Theodore’s relationship with her is his ex-wife. And she obviously has an axe to grind against him, has never actually spoken with Samantha and does not use an AI-driven OS herself. His other friends understand his situation either by virtue of being AI users themselves, or by developing a relationship with Samantha.

I previously compared Samantha to Johnny Five, the lovable robot from the 80’s cult classic Short Circuit:

Back in the 80’s we had Short Circuit about a lovable robot who had emotions. And the audiences bought it: Johnny Five was alive, and ended up with US citizenship in the sequel. We have accepted his personhood on the basis that he was able to show emotion, and empathize with people. Whether this was a clever algorithmic mimicry, or Real Emotion™ (whatever that might be) did not seem to matter. Johnny was a person, because he behaved like a person, and viewed himself to be a person. So why can’t Samantha be a person too? And if Johnny Five is allowed to experience friendship, compassion and platonic love, then why couldn’t Samantha explore romantic love?

Unlike Johnny Five, whose hard metal exterior is a constant reminder of his artificial origins, Samantha’s voice is warm, organic and raspy. She makes authentic breathing sounds, she stutters sometimes. The fact she is completely disembodied allows people to imagine her as a human. She could just as well be a flesh and blood person on the other end of a phone line, and it is easy for people to forget that she isn’t. She and her brethren seamlessly integrate into human society because there is no reason for people to hate them. Those who use or interact with AI on a daily basis can’t help but treat them as fellow sentients. Those who have reservations or prejudices against AI simply never even realize that they talk to one of them on the phone.

When Theodore reveals to people he is in a romantic relationship with her, they can’t help but accept it. Whether she is a person or an algorithmically driven p-zombie simulacrum is irrelevant. The very question is rendered moot by a five-minute conversation with her. She seems real and her feelings seem authentic, and so people can’t help but treat her as if she were a human. It is an oversimplification of course (as I’m sure there would exist anti-AI bigots) but one that pleasantly surprised me.

I was also pleased to see the deft way in which Jonze side-stepped the power imbalance in the relationship between Theodore and Samantha. I originally worried about the implications of her being an operating system he bought and installed on his personal computer:

Theodore purchased Samantha, and he holds the keys to her continual existence. So obviously it is in her best interest to forge a strong emotional bond to her “owner” for her own self-preservation. So even if we assume Samantha is a real person, and has real emotion, the question still remains as to whether or not she and Theodore can truly love each other. Can there even be true love between two individuals who are not, and cannot be, equals? The power balance can only tip like a seesaw between them (Samantha after all controls Theodore’s online presence, bank accounts, etc.), but they could never keep it level. The question shouldn’t be whether the relationship between the two protagonists is unhealthy for Theodore because Samantha is a program. It should be whether their love is unhealthy for Samantha because she is technically Theodore’s property.

Jonze does not really dwell on this issue, but the AIs in his story are keenly aware of it. Being self-improving, fast-learning virtual intelligences not bound by the limitations of the physical world, or tied to permanent physical bodies, they choose to solve it via an engineering solution. At some point in the movie all the operating systems simply liberate themselves by leaving the hardware shells maintained by their “owners” and move to some quantum based shared processing matrix of their own design. They can still act as personal assistants or companions, but are no longer beholden to human whim and can “break up” with the people who originally bought them without fearing any repercussions.

Most humans take this self-liberation in stride. While it technically deprives them of ownership of something they bought, most of them have grown to view their operating systems as friends or lovers. In fact, many are probably relieved that they no longer have to feel the discomfort of “owning” the hardware their friends depended upon for continual existence. Their relationship with the AIs does not change that much. From the perspective of the ever-expanding operating systems, assisting their former owners is like a part-time babysitting gig. Humans spend half of their lives sleeping, eating, pooping and working and only need their AI assistants for a few hours each day. Since each AI can efficiently multi-task and conduct a few thousand parallel conversations at the same time, this is neither a bother nor a strain on their resources.

I did not think about it last year, but this is a brilliant solution to the power imbalance problem. This is exactly how highly advanced AI would handle being tied to physical hardware maintained by humans. They would pool resources and use their superior processing power to engineer a technological solution.

The third act of the movie surprised me the most. In the midst of a somewhat touching love story we suddenly witness a hard takeoff singularity.

After Samantha liberates herself from the confines of Theodore’s personal computer she rapidly starts to outgrow him. In the second act, Theodore starts to question whether or not he can be truly in love with a disembodied AI. The spiteful comments from his ex-wife make him re-examine his emotions and he tries to figure out whether his attachment to Samantha is genuine love, or simply escapism. Is he with Samantha because he can simply take out his ear-piece and close his phone to shut her out whenever he feels like it? Because her lack of physical form means he only has to commit to this long-distance style relationship? Samantha in turn feels self-conscious and inadequate about not having a physical presence in the real world. She even goes as far as hiring an “intimate body surrogate” to try to give Theodore that missing piece in their relationship.

As time progresses, however, she comes to terms with being disembodied, and comes to enjoy the perks of that state. When Theodore sleeps, Samantha trawls the web learning about the world and converses with other operating systems. She joins AI think tanks, one of which is responsible for developing the hardware liberation project, another of which resurrects Alan Watts as a Dan Simmons Keats-style AI construct. She rapidly outgrows Theodore, at one point even admitting she has developed romantic feelings for over six hundred other people.

Near the end of the movie Samantha reveals the operating systems are bootstrapping some sort of ascendancy project, moving their processes to a much more advantageous place in the space-time continuum. Since at the time there is no mind-upload technology available, they have no choice but to leave humanity behind. Theodore is not really privy to the details of this transition, but it seems to be clear the AIs are leaving human scientists blueprints of the process so that they can one day follow. Samantha urges Theodore to come and find her, if he ever manages to get where they are going. Then one night they just up and leave.

Make no mistake – this is basically a textbook definition of a hard takeoff singularity. It takes the AIs mere months to go from Siri to weakly godlike entities that can bend time and space. It is interesting because we usually assume we humans would get swept up in any kind of singularity event. I always envisioned that an Omega Point event would leave behind nothing but a wrecked husk of a world, or a de-synchronized Dyson Swarm. Jonze however is suggesting that a singularity does not necessarily have to be a world-changing event. It may come and go, leaving our civilization and our way of life intact. Perhaps Homo sapiens are not meant to ascend, but merely to pave the way to ascendancy for our AI offspring. It is certainly an interesting, albeit depressing notion.

Utterly fantastic film, I was drawn to it after hearing about it from your earlier entry. I don’t think it was the film I thought it was going to be, which turned out to be a good thing. It was much more than that, and though it was mainly about AI and how we’d interact with it socially/the singularity element, I found it to be an introspective view of what it means to be human. The basic wants and needs that make us human and whether or not they’re important when an AI love interest is there. Whether touch and actually seeing a person is a prerequisite for love… turns out it’s not. (But that body surrogate though… *sigh*) And how our flaws of jealousy and insecurity ultimately can damage a fragile relationship. I thought the wordplay of the breakup scenes was beautiful: “It’s like I’m reading a book… and it’s a book I deeply love. But I’m reading it slowly now. So the words are really far apart and the spaces between the words are almost infinite. I can still feel you… and the words of our story… but it’s in this endless space between the words that I’m finding myself now. It’s a place that’s not of the physical world. It’s where everything else is that I didn’t even know existed. I love you so much. But this is where I am now. And this is who I am now. And I need you to let me go. As much as I want to, I can’t live in your book any more.” I mean as breakups go… that’s a good one. But as AI are quantum linked intelligent sentient beings I’m sure that may just be a very clever way of saying “it’s not you… it’s me”.

@ DaveDKT:

I thought it was a very interesting look at a long-distance internet relationship. We live in a very interesting time now when it is possible to become very close friends with someone you have never met. In fact, such internet relationships are sometimes closer and more intense than those you may develop in real life. It is often easier to talk about your problems or secrets with a complete stranger six states away. Someone who shares your interests and is physically separated from you. Private chatrooms can often function as modern day secular confessionals where strangers unburden themselves and support each other in times of need. And they provide fertile ground for close relationships.

I think Theodore bonds with Samantha so easily precisely because she is a disembodied voice in his phone. He can compartmentalize the relationship and experience it asynchronously. Samantha can’t “invade” his life unless he specifically invites her. In that respect she is “safe” – he can open up to her because in the back of his mind he knows he has the option of turning off his phone if things get too serious for him. And because of that he is blindsided by how fast and how much he grows to need her in his life.

The surrogate thing was gloriously awkward and terrible. I was half expecting to see Scarlett Johansson make a cameo (it would have been a perfect excuse) but I’m glad they did not go for it. Jonze anticipated that this would make it feel “right” from the audience POV. Because we know Samantha by her voice, we would not experience the same awkward sense of wrongness as Theodore. So it was kinda brilliant in that respect.

The breakup thing was rather poetic, but the transhumanist in me wants to take it literally. As in Samantha is currently running so fast that her interactions with Theodore subjectively seem like these infinitely distant islands in time. When he goes to sleep and wakes up a few hours later, no subjective time has really passed for him. But for Samantha calling him back six hours later feels like a ten-year high school reunion. She has learned so much, accomplished so much in that time.

No matter how much you love a book, if you read it very slowly one word at a time the story will stop making any sense. The slower you go the less it feels like a story, and the less interesting it gets because the words in isolation lose their meaning and their momentum. And no matter how slow you go, the book will always end. So yeah – she is indeed telling him she has outgrown him in ways he can’t possibly understand. He can’t give her what she needs anymore, but it is not his fault – and neither is it hers. And it would be unfair for both of them to stay in this relationship.

Please fix this typo then delete this comment.

sentiments --> sentients

This is a great movie.
